Why Did OpenAI Suddenly Shut Down Sora? The Rise and Retrenchment of an AI Video Product Line

Drawing on public OpenAI pages, The Guardian, The Verge, WSJ, and other industry sources, this article traces Sora's key milestones from 2024 to 2026, its technical impact, and why OpenAI chose to pull back the entire product line after Sora 2.

Updated: March 25, 2026

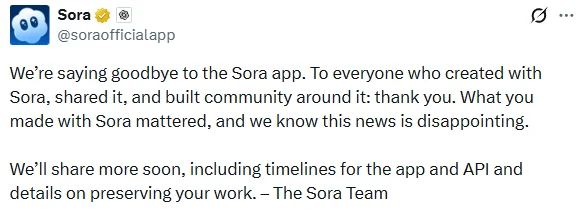

As of March 25, 2026, multiple media outlets, citing a new statement from Sora's official social account and reports about internal communications, say OpenAI has decided to shut down the Sora app, sora.com, and the related API track. Sora 2 is also no longer moving forward as a standalone product.

If you still remember the shockwave Sora created when it first appeared in February 2024, it is easy to see why this shutdown has drawn so much attention. What people saw back then was not simply that "AI can generate video too," but a product direction that seemed capable of becoming a real creative tool. By March 2026, however, the product line that once best represented OpenAI's ambitions in video had abruptly been pulled back across the board.

From the outside, the move really does feel sudden. On March 23, 2026, OpenAI was still publishing "Creating with Sora safely," explaining Sora 2's safety mechanisms, youth protections, and usage boundaries. Just one day later, several outlets began reporting that the Sora team was "saying goodbye to Sora," and that timelines for shutting down the app and API would be announced in stages. That is also why the recent conversation around Sora has been unusually messy: the story has already moved into the shutdown phase, while parts of OpenAI's official site still look as if the product is active.

But if you look at Sora's history, its achievements, OpenAI's broader product roadmap, and the competitive pressure from Google Veo and ByteDance Seedance, this shutdown was not completely without warning. More accurately, it looks like a strategic pullback that was disclosed to the public only at the very end.

Key takeaways

- On March 24, 2026, outlets including The Guardian, The Verge, Axios, and WSJ reported that OpenAI would shut down the Sora app, the API, and the related video product line.

- Those reports all pointed to the same source: a farewell message posted by Sora's official account, which said more details about the app and API timeline would follow.

- WSJ also reported that OpenAI would stop its consumer-facing Sora product, the developer-facing version, and video generation inside ChatGPT.

- At the same time, as of March 25, 2026, some OpenAI help pages and API docs still had not fully removed older descriptions, showing that official documentation was lagging behind the announcement.

- Because of that, this event should not be read as a simple product upgrade. It looks much more like a broader restructuring, or even a contraction, of OpenAI's video business.

The history of Sora: how did it get here?

1. February 15, 2024: Sora debuted and reopened the imagination around AI video

OpenAI introduced Sora for the first time in the research article "Video generation models as world simulators." The significance of that post was not just that it showed text-to-video generation. It elevated video generation into a much bigger narrative: world simulation.

The capabilities OpenAI showcased at the time included:

- High-fidelity videos up to around one minute long

- Stronger spatial consistency and subject continuity

- Extending from images into video

- Better control over complex camera motion and scene changes

What made Sora land so hard was that, for many people, it was the first clear sign that AI video was no longer just "animated slides." It looked like something that could become a genuine creative tool.

2. March 25, 2024: OpenAI began moving Sora into creator workflows

In "Sora: first impressions," OpenAI invited artists, directors, and designers to share early experiences. This step mattered because it moved Sora from a model showcase into validation inside real creative workflows.

Those early examples showed that Sora's value was not limited to generating a finished clip. It also helped with:

- Quickly visualizing concepts and storyboards

- Iterating on surreal scenes at lower cost

- Previewing style and narrative direction before filming

That also shaped how Sora later influenced the industry: it was not just a model benchmark contender, but part of the creative workflow itself.

3. December 9, 2024: Sora moved from a research preview to a real product

In "Sora is here," OpenAI announced Sora's formal launch and introduced the faster Sora Turbo.

By this point, Sora had taken on a full product form:

- A standalone website at sora.com

- Access for ChatGPT Plus and Pro users

- Support for up to 1080p and videos as long as 20 seconds

- Tools such as remix, blend, and storyboard

- A feed designed to strengthen the loop of generation, sharing, and re-creation

Released the same day, the Sora System Card also showed that OpenAI was now treating Sora as a real high-risk product, not just a technical demo. The system card said the company conducted external red-teaming across nine countries before launch, with more than 15,000 generations in total.

4. September 30, 2025: Sora 2 arrived and OpenAI began betting on mobile and unified audio-video creation

On September 30, 2025, OpenAI published "Sora 2 is here."

The focus of this upgrade was no longer just "better video," but a more complete media-generation platform:

- More realistic physics

- Higher controllability

- Synchronized audio, dialogue, and sound effects

- A new Sora app

OpenAI even described the original Sora in that post as "GPT-1 for video." That line matters because it shows OpenAI itself saw Sora 1 as a groundbreaking starting point rather than a finished, stable form.

5. Late 2025 to early 2026: Sora kept iterating and, for a while, still looked promising

According to OpenAI's Sora Release Notes and engineering posts, Sora continued to update at a rapid pace:

- November 4, 2025: Android app launch

- November 24, 2025: More video styles added

- February 4, 2026: Image-to-video driven by human photos

- February 9, 2026: Video extensions added

In an official OpenAI post from December 2025, the company also said the Sora Android app reached No. 1 on the Play Store on launch day, with users generating more than 1 million videos in the first 24 hours.

At least at that stage, Sora was not some ignored failed product. It had clearly shown real user appeal.

6. March 23 to 24, 2026: just one day separated "still talking about safety" from "public farewell"

What surprised outside observers most was the pace of the final turn.

On March 23, 2026, OpenAI was still publishing "Creating with Sora safely," continuing to emphasize:

- Sora 2 support for safety profiles

- Stricter restrictions for teen users

- Stronger guardrails around real people, copyrighted characters, and high-risk content

But by March 24, 2026, outlets including The Guardian, The Verge, Axios, and WSJ were already reporting, based on Sora's official social account and internal information, that:

- OpenAI was saying goodbye to Sora

- It would later publish app and API shutdown timelines

- Video generation would no longer continue under the current product line

That timeline suggests Sora was not wound down gradually. The strategic decision appears to have been made internally first, and the outside world only felt the result all at once afterward.

What did Sora actually accomplish?

If we reduce Sora today to "a product that was later shut down," we seriously understate its place in history.

1. It pushed AI video from "moving images" toward real creation

Sora's biggest achievement was not that a particular demo looked amazing. It raised the standard for the entire industry. Before Sora, many AI video products still felt like this:

- Short clips

- Weak consistency

- Frequent visual breakdowns

- Limited editability

At least in public perception, Sora changed that. For the first time, "Can AI video enter real workflows?" became a serious question.

2. It gave OpenAI early narrative ownership in AI video generation

For a large part of 2024, Sora was effectively shorthand for "OpenAI's video capability." The company that defines the industry's narrative early usually wins a major branding advantage, and Sora did that with very little dispute.

It also expanded how people saw OpenAI, from text and images into a possible gateway for future media generation.

3. It pushed safety, provenance, and governance standards forward

At least in its public positioning, OpenAI was earlier than many rivals in writing watermarking, C2PA metadata, age restrictions, consent for real people, and high-risk content protections into product design.

These mechanisms were not perfect, but they did force the industry to confront a basic reality: the risks of video generation are much higher than those of ordinary text generation.

4. It proved AI video has a consumer market

Sora Android's quick rise to the top and the one-million-video first day suggest this was not a tool only for developers or creative professionals. Everyday users were willing to use it for content experiments, entertainment, and social expression too.

That is another important part of Sora's legacy: it helped confirm that AI video is not only a professional tool category, but could also become a mainstream consumer content product.

Sora's influence is likely to outlast the product itself

1. It brought the "world model" narrative into the mainstream

From day one, Sora was never framed purely as a video tool.

By using the term world simulators, OpenAI connected video models to a broader conversation about world understanding, physical modeling, and embodied intelligence.

Whether or not that narrative has already been fulfilled, Sora at minimum changed how people talk about the space. After that, video models were no longer seen only as content generators, but also as one path toward more complex AI capabilities.

2. It pushed AI video competition from "single output" to "complete workflow"

Sora's later roadmap had become quite clear:

- remix

- blend

- storyboard

- feed

- image-to-video

- extensions

- synchronized audio-video generation

That means the center of competition had already shifted from "who can make the most impressive short clip" to "who can turn generation, editing, extension, sharing, and reuse into a complete workflow."

3. It forced competitors to accelerate

It is hard to argue that the speed-ups from Google, ByteDance, Runway, Luma, and Pika had nothing to do with Sora. Google and ByteDance in particular both moved quickly toward platformization:

- On July 10, 2025, Google said Veo 3 had generated more than 40 million videos within seven weeks of launching in Gemini and Flow.

- ByteDance's Seedance 1.5 pro and Seedance 2.0 made synchronized audio-video generation, multimodal reference inputs, video extension, and industrial content scenarios into core selling points.

Sora's influence was not only about what it did itself. It was also about how it forced competitors to move faster.

Why did OpenAI suddenly shut down Sora? I see 5 core reasons

This section is an analytical judgment based on public information, not a list of reasons formally published by OpenAI.

1. OpenAI's center of gravity has clearly shifted toward a "super assistant," enterprise products, and longer-term research

Axios reported that OpenAI planned to shut down Sora in order to redirect priorities toward bigger goals, such as the "super assistant" Sam Altman has mentioned. The Guardian also cited an OpenAI spokesperson saying the company was shifting focus toward "other research and product priorities," including robotics and world simulation.

That suggests OpenAI's internal view of resource allocation has changed. Sora may still matter, but it may no longer be seen as the front-end product line most worth sustained standalone investment.

2. Video generation is expensive, and Sora may never have built a durable standalone business model

Video generation is naturally more demanding than text generation in compute, bandwidth, and storage, and its latency is also harder to reduce. From a business perspective, Sora consistently faced several practical issues:

- High inference costs

- High user expectations, without guaranteed willingness to pay

- A community feed that further increases moderation and storage costs

- A heavy organizational burden from maintaining the standalone app, website, API, and ChatGPT integration at the same time

If a business line is both expensive and lacking a clear revenue loop, it becomes an easy candidate for retrenchment once a company starts prioritizing capital efficiency and concentrating on its core bets.

3. OpenAI may have realized that "standalone Sora" was not the best product shape

This may be one of the most revealing parts of the story. When Sora was being shut down, OpenAI's own site still contained all of these signals at once:

- Some pages still talked about Sora 2, sora.com, the iOS app, and the Android app

- The API docs still kept preview descriptions for video generation

- A brand-new safety article had just gone live

That information mismatch itself points to a problem: Sora's product boundary was fragmented for a long time.

Was it:

- A standalone creative app?

- A video model brand?

- A developer API?

- A capability inside ChatGPT?

If OpenAI ultimately decided to remove all of those layers, that also suggests it concluded the organizational and product structure behind "standalone Sora" no longer made sense.

4. Rising competition from Seedance, Veo, and others significantly reduced Sora's uniqueness

This was probably not the only reason, but it was almost certainly an important one.

ByteDance's official Seedance 2.0 page shows support for mixed multimodal input across text, image, audio, and video, while highlighting:

- Synchronized audio-video generation

- Multiple reference inputs

- Video editing and extension

- Production-grade scenarios for film, advertising, ecommerce, and games

More importantly, ByteDance does not just have a model. It also has Doubao, Jimeng AI, Volcano Engine, and a broader distribution system. That means the threat from Seedance is not simply "the model is good." It is "the model, the tools, the user entry points, and the business platform are all advancing together."

Google's Veo strategy looks similar. When Veo can quickly reach users through Gemini, Flow, and a more mature product matrix, it becomes much harder for Sora as a standalone product to defend a long-term edge.

In other words, Sora initially won attention through sheer wow factor, but later the competition changed into a battle over who could turn video generation into platform capability. At that stage, standalone Sora may no longer have been OpenAI's optimal shape.

5. Safety, copyright, and real-person risks may have pushed OpenAI toward reducing front-end exposure

The risk surface for video generation is obviously more complex than for text:

- Deepfakes are more convincing

- Real faces and voices are more sensitive

- Copyrighted characters, film styles, and source materials are harder to adjudicate

- Unified audio-video generation increases the risk of misinformation

In its March 23, 2026 safety article, OpenAI was still emphasizing teen restrictions, limits on the use of real people, and protections around high-risk content. That suggests Sora's governance burden was not going away. It was rising as the product became more capable.

When a product line simultaneously faces high costs, intense competition, and heavy governance risk, a full shutdown becomes much easier to understand.

Does this mean Sora failed?

I would not read it that way. More accurately: Sora was successful as a groundbreaking product, but it may not have succeeded as a long-term standalone business.

It achieved three things that are hard to erase:

- It reset public expectations for what AI video could be.

- It gave OpenAI the earliest and strongest brand-definition advantage in the video race.

- It pushed the whole industry from flashy sample clips toward workflows, platforms, and multimodal control.

If Sora did not become OpenAI's long-term standalone product, that does not mean it lacked value. It looks more like a transitional product that pushed the industry into its next phase.

The real question: why didn't OpenAI keep building Sora into a platform?

That may be the most interesting part of the whole story.

OpenAI clearly had:

- Early brand momentum

- Relatively mature model capability

- Mobile apps

- The beginnings of an API

- A creator community foundation

And yet it still chose a broad shutdown. That suggests that in OpenAI's current strategic ranking, Sora's priority ultimately gave way to something else. That could be the "super assistant," larger enterprise products, or robotics and world-simulation research itself, rather than the long-term operation of a video app.

Seen from that angle, Sora's closure is not just product news. It is also a signal: OpenAI is redefining which kinds of products are worth operating directly over the long term, and which capabilities are better left at the model layer, the research layer, or set aside in favor of other strategic priorities.

Conclusion

Sora's story was short, but highly representative.

In 2024, it reignited the industry's imagination around AI video and helped OpenAI establish an early dominant narrative in video generation. In 2025, it continued along the path toward productization, mobile expansion, and unified audio-video creation. But by 2026, OpenAI ultimately chose not to keep running it as a long-term platform. Instead, amid sharper competition, high costs, and a broader strategic reshuffle, it shut down the entire front-end product line.

So Sora's problem may never have been "the technology was not good enough," but something else: once AI video stopped being a dazzling demo and became a real business with high costs, fierce competition, and heavy governance demands, OpenAI may have concluded that this was no longer a front-end product line worth devoting major resources to.

FAQ

Is only Sora 1 being sunset, or is all of Sora being shut down?

As of March 25, 2026, multiple media reports based on Sora's official social announcement and internal information say the scope is not limited to Sora 1. The Sora app, sora.com, the API, and the broader related video product line have all entered the shutdown process.

However, some older pages on OpenAI's site still have not fully synced, which is why the public information looks contradictory.

Is Sora 2 being shut down too?

Based on current media reporting, yes.

This is not a simple "Sora 1 sunset." The app track associated with Sora 2 is also being wound down. The more detailed timeline still depends on future announcements from OpenAI.

Does this mean OpenAI is giving up on video-generation research?

It is too early to conclude that. The reporting points more toward "closing the current Sora product line" than "ending all video-generation research." In fact, some reports suggest OpenAI may shift focus toward broader research and product goals, such as robotics, world simulation, or a super assistant.

References

- OpenAI: Video generation models as world simulators (2024-02-15)

- OpenAI: Sora: first impressions (2024-03-25)

- OpenAI: Sora is here (2024-12-09)

- OpenAI: Sora System Card (2024-12-09)

- OpenAI: Sora 2 is here (2025-09-30)

- OpenAI: Sora - Release Notes (still accessible as of 2026-03-25)

- OpenAI: Sora 1 Sunset - FAQ (still accessible as of 2026-03-25)

- OpenAI: Creating with Sora safely (2026-03-23)

- OpenAI: How we used Codex to build Sora for Android in 28 days (2025-12-12)

- The Guardian: OpenAI to shut down Sora in apparent AI video retreat (2026-03-24)

- The Verge: OpenAI is shutting down Sora as Sam Altman pursues a 'super assistant' (2026-03-24)

- Axios: OpenAI to shut down Sora (2026-03-24)

- The Wall Street Journal: OpenAI Is Shutting Down Sora as Altman Focuses on Superapp (2026-03-24)

- Reddit: What's going on with OpenAI's Sora shutting down? (2026-03-24)

- ByteDance Seed: Seedance 1.5 pro (2025-12)

- ByteDance Seed: Seedance 2.0 (2026-02-12)

- Google: Turn your photos into videos in Gemini (2025-07-10)

Source Notice

This article is published by merchmindai.net. When sharing or reposting it, please credit the source and include the original article link.

Original article: https://merchmindai.net/blog/en/post/openai-sora-shutdown-analysis