China’s LLM Landscape in 2026: How Models, Products, and Ecosystems Are Being Reordered

Drawing on Reddit discussions, official model releases, QuestMobile reports, and regulatory data, this article maps the real shape of China’s LLM market as of March 2026.

Updated: March 24, 2026

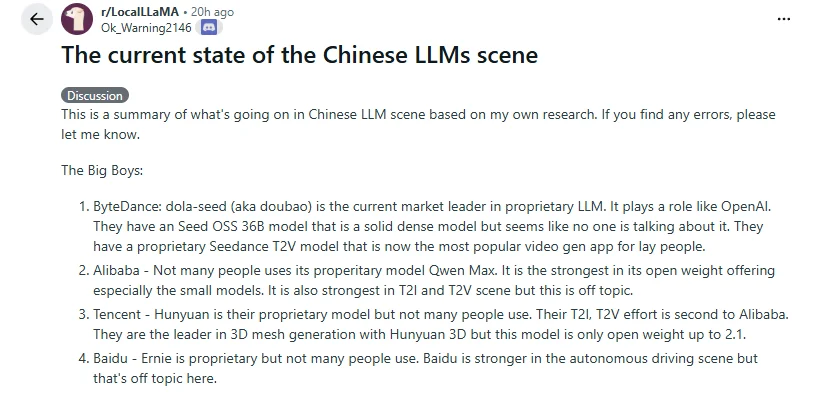

This article starts from a Reddit post on r/LocalLLaMA: The current state of the Chinese LLMs scene. The post groups Chinese players into three broad layers: the big tech companies, DeepSeek, and the so-called “Six Tigers” of AI startups. That framing works well as a quick primer, but if we stop at the forum view, we miss the more important reality: China’s LLM competition is no longer a single leaderboard race. It is now a multi-front contest shaped by user access, open-source models, commercialization, agent workflows, and the combined pressure of regulation and compute constraints.

If you only follow LocalLLaMA, it is easy to conclude that “DeepSeek and Qwen define almost everything.” If you only look at domestic app usage data, you might come away thinking “Doubao is the overwhelming winner.” Neither view is exactly wrong, but they describe different layers of the market. China’s LLM landscape today looks less like a one-dimensional ranking and more like a layered map that is being redrawn at high speed.

Key takeaways

- At the consumer entry-point layer, ByteDance’s Doubao remains one of the strongest players, and its edge comes primarily from distribution and product reach rather than from model buzz inside forums.

- In open source and developer ecosystems, Alibaba’s Qwen and DeepSeek still define the two most globally influential lines.

- Startups are not out of the game, but the path to winning has shifted from “build a general-purpose chat model” to “differentiate around agents, coding, long context, multimodality, or vertical use cases.”

- A fast-forming new battlefield is the Claude Code-style coding agent workflow, along with the broader agent framework ecosystem around OpenClaw. The real question is who becomes the default model backend inside terminals, IDEs, and toolchains.

- The real barrier in China’s LLM market is increasingly not “who can train a model,” but “who can turn that model into a real product, launch it compliantly, and absorb long-term inference and compute costs.”

Why Reddit gets only half the picture

The Reddit post titled The current state of the Chinese LLMs scene did capture two important truths.

First, Chinese vendors really have been unusually aggressive in the cadence of open releases. From 2025 into 2026, new open weights, technical reports, reasoning models, and agent models have appeared almost every few weeks. Qwen, DeepSeek, Kimi, GLM, MiniMax, StepFun, ByteDance Seed, and Xiaomi’s MiMo, which the Reddit thread did not fully unpack, have all kept shipping updates. On that point, the forum view is fair.

Second, China’s market genuinely has a dual structure in which big tech companies and high-profile startups coexist. That already makes it feel different from the U.S. market, where public attention is often concentrated on a much smaller number of frontier model vendors.

Where that framing remains incomplete is this: there is no single dimension along which “the strongest” Chinese LLM company can be identified.

- If you ask who reaches the most mainstream users inside China, the answer points more toward Doubao.

- If you ask who has the greatest influence in the global open-source community, the answer usually lands on Qwen and DeepSeek.

- If you ask who is best positioned to embed models into existing business scenarios, Tencent, Alibaba, Baidu, and ByteDance have much stronger advantages than forum discussion usually reflects.

- If you ask who still has the best chance of breaking out through a sharp technical edge, the answer swings back toward startups like Kimi, GLM, MiniMax, and StepFun.

So instead of treating China’s LLM market as a single ranking, it is more accurate to see it as a layered map. User access, base models, ecosystems, toolchains, and commercialization are all being reordered, but not at the same speed.

1. Start with the most overlooked layer: user access

As of June 2025, China already had 515 million generative AI users, with a penetration rate of 36.5%. That means LLM competition in China is no longer a niche conversation inside tech circles. It has already entered the mass-market stage.

Source: Xinhua via China.gov.cn, 2025-10-18

QuestMobile’s AI application-layer report, published on March 3, 2026, adds another important set of numbers. As of December 2025, mobile AI apps in China had 722 million monthly active users, AI assistants from smartphone vendors had 559 million, and PC AI apps had 205 million. The same report also notes that after overtaking DeepSeek in August 2025, Doubao held onto the No. 1 position.

Source: QuestMobile, 2026-03-03

Looking at the market in more detail, a 199IT / Sina Tech write-up citing QuestMobile data says that by February 4, 2026, Doubao had reached 227 million users in the native AIGC application category, leading DeepSeek by nearly 100 million users. DeepSeek, however, recorded 1250.7% year-over-year growth on the web, which suggests far stronger penetration among developers and high-frequency productivity users.

Source: Sina Tech citing QuestMobile, 2026-02-04
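The percentages above are easy to misread, so a small arithmetic sketch may help. It uses only the figures quoted in this section. The helper names are illustrative, and the "implied base" is an inference from the quoted penetration rate, not a number the sources state.

```python
def growth_multiple(yoy_percent: float) -> float:
    """Convert a year-over-year growth percentage into an end/start multiple."""
    return 1 + yoy_percent / 100


def implied_base(count_millions: float, rate_percent: float) -> float:
    """Back out the base (in millions) that a count and a percentage rate imply."""
    return count_millions / (rate_percent / 100)


# Figures quoted in the text above.
deepseek_web_multiple = growth_multiple(1250.7)  # DeepSeek web-side growth, year over year
penetration_base = implied_base(515, 36.5)       # generative AI users vs. penetration rate
deepseek_app_floor = 227 - 100                   # Doubao users minus a lead of "nearly 100 million"

print(f"DeepSeek web multiple: {deepseek_web_multiple:.1f}x")
print(f"Implied base behind 36.5% penetration: {penetration_base:.0f} million")
print(f"DeepSeek native-app users: somewhat above {deepseek_app_floor} million")
```

Read this way, 1250.7% growth means DeepSeek's web usage ended the period at roughly 13.5 times its starting level, while its native-app user count still sits well below Doubao's.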

These numbers tell us something important: the winners at the user-entry layer are not the same as the winners in the open-source community.

Doubao is strong not just because of the model itself, but because it sits behind Douyin, CapCut, Volcano Engine, and a broader distribution machine. DeepSeek’s strength, by contrast, is more visible in technical brand recognition, developer mindshare, and habits formed around web and API usage. They are not competing on exactly the same layer.

2. In open source, Chinese vendors really have become the most active major bloc

Once we shift the lens back to the model community, Reddit’s instincts start to look much more accurate.

Qwen is still one of the steadiest open foundations, but the product center of gravity has moved to Qwen3.5

When Alibaba released Qwen3 on April 29, 2025, it launched two MoE lines and six dense models at once, while explicitly opening the weights under the Apache 2.0 license. Qwen3-235B-A22B came with 235B total parameters and 22B active parameters, while Qwen3-30B-A3B kept pushing the small-but-efficient route.

Source: Qwen3 official blog, 2025-04-29

Then, on July 22, 2025, Alibaba released Qwen3-Coder-480B-A35B-Instruct, with native 256K context expandable to 1M, and designed directly around developer workflows such as Claude Code, Cline, and OpenAI-compatible interfaces.

Source: Qwen3-Coder official blog, 2025-07-22

But if we move the clock forward to March 2026, it is no longer enough to describe Qwen externally through Qwen3 alone. Alibaba Cloud’s public documentation shows that:

- The Qwen3.5 series has become the latest main line for visual understanding and hybrid reasoning. The docs present qwen3.5-plus and qwen3.5-flash as thought-enabled by default, while the open side lists qwen3.5-397b-a17b, qwen3.5-122b-a10b, qwen3.5-27b, and qwen3.5-35b-a3b.

Source: Alibaba Cloud Model Studio: Deep Thinking

- Qwen3.5 is also the native multimodal line. Alibaba Cloud’s vision documentation explicitly describes Qwen3.5 as “the latest generation of visual understanding models,” with qwen3.5-plus positioned as the highest-performance and recommended default option.

Source: Alibaba Cloud Model Studio: Vision with Qwen3.5

- On the commercial flagship side, qwen3-max-2026-01-23 is still listed in Alibaba’s public docs as an available Max-tier model, alongside qwen3.5-plus in the latest supported-model matrix.

Source: Alibaba Cloud Model Studio: Claude Code integration

In other words, Qwen3 was a major starting point for this wave of open-source momentum, but as of March 2026, Alibaba’s real product focus is better described as a three-part stack: “Qwen3 as the open foundation, Qwen3.5 as the native multimodal line, and qwen3-max as the commercial flagship.”

DeepSeek remains one of the most influential technical brands

When DeepSeek-R1 launched on January 20, 2025, the company explicitly stated that both the model and the code were released under the MIT License, and that API outputs could be used for fine-tuning and distillation.

Source: DeepSeek-R1 official announcement, 2025-01-20

The significance of that launch was not simply that another strong model arrived. It was that DeepSeek pushed low-cost inference, open distillation, and the reinforcement-learning narrative to the center of industry discussion. Whether or not a company actually deploys DeepSeek, the whole market began taking the question of “can open reasoning models become the commercial default?” much more seriously afterward.

Startups have not fallen behind, and their model lines are still moving forward

If you only follow forum attention, it is easy to misread the market as “everyone except Qwen and DeepSeek is just tagging along.” The official release cadence does not support that conclusion.

- Moonshot / Kimi K2.5: Kimi K2.5 has already replaced the earlier K2. The official project describes it as an open-source, native multimodal agentic model, trained with roughly 15T mixed vision-and-text tokens on top of K2-Base. It has 1T total parameters, 32B active parameters, and supports 256K context.

Source: MoonshotAI/Kimi-K2.5 on GitHub

- Z.ai / GLM-5: Zhipu’s flagship has already moved from GLM-4.5 to GLM-5. Official documentation positions it as a flagship base model for Agentic Engineering, with 200K context, 128K maximum output, and a core architecture expanded to 744B total parameters and 40B active parameters.

Source: Zhipu AI docs: GLM-5

- MiniMax-M2.7: As of March 18, 2026, MiniMax’s latest text flagship had already advanced to MiniMax M2.7. The company describes it as the first model to “deeply participate in its own evolution,” emphasizing complex agent harnesses, Agent Teams, dynamic tool search, software engineering, and professional office work. The announcement reports benchmarks including SWE-Pro 56.22%, VIBE-Pro 55.6%, and Terminal Bench 2 57.0%.

Source: MiniMax official news: MiniMax M2.7

- StepFun / Step-3.5-Flash: Based on official docs and model cards from late February 2026, StepFun’s most notable open model is still step-3.5-flash. StepFun calls it “the most powerful open-source base model,” with 256K context and 196B total parameters / 11B active parameters, and it is already documented for Claude Code, Codex, and broader agent platform integrations.

Source: StepFun model overview

Source: Step 3.5 Flash model card

- Xiaomi / MiMo-V2-Pro and MiMo-V2-Omni: Xiaomi has not sat out this race, and its lineup has already advanced to MiMo-V2-Pro and MiMo-V2-Omni. MiMo-V2-Pro is presented as a flagship base model for real agent workloads, with more than 1T parameters, 42B active parameters, 1M token context, and an explicit push from coding toward claw. MiMo-V2-Omni, meanwhile, is a unified multimodal agent foundation spanning image, video, audio, and text, with native structured tool calling, function execution, and UI grounding.

Source: Xiaomi MiMo-V2-Pro official page

Source: Xiaomi MiMo-V2-Omni official page

Taken together, these official releases support a more realistic conclusion: China’s open LLM ecosystem is not simply “Qwen and DeepSeek dominate while everyone else has disappeared.” It is a supply structure with two top leaders and a wider group of fast-moving followers that are still iterating aggressively. And those follow-on releases did not stop in mid-2025. Xiaomi’s MiMo alone is enough to show that “Chinese LLMs” should not be reduced to the usual list of AI startups.

Big tech is also moving fast, just with a different playbook

One additional clarification matters here: ByteDance should not be grouped under “startups.” It is more accurate to say that ByteDance, Tencent, Baidu, and Alibaba are advancing through a different strategy: fast iteration on the commercial front line, combined with selective openness around some models or tooling.

- ByteDance / Seed 2.0 and Seed-OSS: As of February 14, 2026, ByteDance’s latest general-purpose line had already moved to Seed 2.0. The official page describes it as a family of general-purpose agent models in Pro, Lite, and Mini sizes, built for large-scale production deployment, multimodal understanding, long-horizon tasks, and economically valuable workloads.

However, as of March 24, 2026, the official public materials I could verify do not show a public weights repository released alongside Seed 2.0. ByteDance’s clearly open main lines remain Seed-OSS and Seed1.5-VL. That judgment is based on the official Seed 2.0 launch materials and the ByteDance Seed GitHub organization page.

Source: ByteDance Seed 2.0 official page

Source: Seed 2.0 Official Launch

Source: ByteDance-Seed GitHub organization

Source: ByteDance-Seed/seed-oss on GitHub

- Tencent and Baidu show a similar pattern. They are moving quickly in commercial APIs, enterprise integration, agent tooling, and cloud platform bundling, even if forum mindshare is less concentrated around them than it is around open-source names like Qwen or DeepSeek.

3. The real battleground has already shifted from “chat quality” to agents and workflows

In 2024, most of the conversation was still about chat experience and benchmark scores. By 2025 and 2026, the center of gravity had clearly moved toward agents and executable workflows.

One obvious sign is that nearly every new model release now highlights the same cluster of terms:

- agentic coding

- tool use

- OpenAI-compatible API

- Anthropic-compatible API

- long context

- multimodal reasoning

For example, Qwen3-Coder was built with direct support for Claude Code and Cline in mind. Tencent Hunyuan, meanwhile, published both OpenAI-compatible and Anthropic-compatible API documentation in January 2026.

Source: Tencent Hunyuan OpenAI-compatible API docs

Source: Tencent Hunyuan Anthropic-compatible API docs
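To make "OpenAI-compatible" and "Anthropic-compatible" concrete, here is a minimal sketch of the two request shapes a vendor has to accept. The paths, headers, and body fields follow the public OpenAI Chat Completions and Anthropic Messages conventions. The model name and API key are placeholders, and any given vendor's compatible endpoint may use a different host, path prefix, or auth scheme, so treat this as an illustration rather than Tencent's or anyone else's documented interface. Nothing is sent over the network.

```python
import json


def openai_style_request(model: str, prompt: str, api_key: str) -> dict:
    """Build a request in the OpenAI Chat Completions shape."""
    return {
        "path": "/v1/chat/completions",
        "headers": {
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        "body": {
            "model": model,
            "messages": [{"role": "user", "content": prompt}],
        },
    }


def anthropic_style_request(model: str, prompt: str, api_key: str,
                            max_tokens: int = 1024) -> dict:
    """Build a request in the Anthropic Messages shape (max_tokens is required)."""
    return {
        "path": "/v1/messages",
        "headers": {
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        "body": {
            "model": model,
            "max_tokens": max_tokens,
            "messages": [{"role": "user", "content": prompt}],
        },
    }


# "example-model" and "PLACEHOLDER_KEY" are hypothetical values for illustration.
openai_req = openai_style_request("example-model", "Summarize this diff.", "PLACEHOLDER_KEY")
anthropic_req = anthropic_style_request("example-model", "Summarize this diff.", "PLACEHOLDER_KEY")

print(json.dumps(openai_req["body"], indent=2))
print(json.dumps(anthropic_req["body"], indent=2))
```

The two shapes are close enough that supporting both is mostly an adapter problem, which helps explain why vendors can offer both to reach tools built around either convention.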

Alibaba’s latest documentation is pushing in the same direction. In Model Studio and Coding Plan documentation from March 2026, models such as qwen3.5-plus, kimi-k2.5, glm-5, and MiniMax-M2.5 are already organized as models meant to plug into coding tools, not just web chat products. That also highlights a practical reality: a model vendor’s newest flagship and the exact version currently exposed through third-party cloud access do not always move in lockstep.

Source: Alibaba Cloud Model Studio: Coding Plan overview

More precisely, two trajectories are now converging. One is the Claude Code-style coding agent workflow. The other is the broader agent evaluation and framework ecosystem represented by OpenClaw, ClawEval, and PinchBench. In other words, vendors are not just competing over who can write code. They are competing over who can own an executable workflow end to end. Recent moves by Chinese vendors make that increasingly obvious:

- Alibaba Cloud Model Studio now provides direct Claude Code integration docs, and the examples explicitly point ANTHROPIC_BASE_URL at Alibaba’s endpoint and set ANTHROPIC_MODEL to qwen3.5-plus.

Source: Alibaba Cloud Model Studio: Claude Code docs

- Zhipu GLM does more than merely support Claude Code. Its official FAQ explicitly documents model mapping for GLM Coding Plan with Claude Code, and says that Max and Pro plans already support GLM-5.

Source: Zhipu AI: Claude Code docs

Source: Zhipu AI: GLM Coding Plan FAQ

- Kimi may not present “Claude Code compatibility” as its loudest headline, but its public posts keep circling around Agentic Coding, tool use, MCP servers, and Kimi Playground.

Source: Kimi K2 update: stronger coding capabilities

Source: Kimi Playground: tool-use capabilities

- StepFun goes so far as to give Claude Code & Codex its own section in the model card. That suggests agent compatibility is not being treated as a side feature, but as a core selling point.

Source: Step 3.5 Flash model card

- Baidu is taking a more infrastructure-oriented route. Baidu Qianfan now offers a Coding Plan for tools such as Claude Code, while Baidu Cloud docs also keep promoting fast deployment of OpenClaw into enterprise WeChat, QQ, DingTalk, and related scenarios.

Source: Baidu Qianfan Coding Plan

Source: Quickly deploy OpenClaw

- Xiaomi MiMo-V2-Pro / Omni look like a direct bet on coding, claw, and multimodal agent workflows from the model-foundation layer itself. The former emphasizes large-scale agent tasks and ultra-long context, while the latter extends the stack to image, video, audio, and UI grounding.

Source: Xiaomi MiMo-V2-Pro official page

Xiaomi is also unusually explicit about this direction. The MiMo-V2-Pro page literally says “generalizing from coding to claw,” and describes OpenClaw as a general agent framework that is heating up rapidly in the open-source community. The same page reports PinchBench #3 globally and ClawEval #3 globally. Meanwhile, MiMo-V2-Omni extends that route beyond text and code into image, video, audio, and UI grounding. That makes the broader point clearer: in China, the conversation is no longer only about code completion. It is about more general executable agents.

Source: Xiaomi MiMo-V2-Pro official page

Source: Xiaomi MiMo-V2-Omni official page
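In practice, the Claude Code integrations listed above mostly come down to overriding a couple of environment variables before the tool starts. The sketch below builds such an environment in Python. ANTHROPIC_BASE_URL, ANTHROPIC_MODEL, and qwen3.5-plus are the names mentioned in the Alibaba example. The URL is a deliberately invalid placeholder, not a real vendor endpoint, and credentials are left out because each vendor documents its own key variable.

```python
import os
from typing import Mapping, Optional


def claude_code_env(base_url: str, model: str,
                    base_env: Optional[Mapping[str, str]] = None) -> dict:
    """Return a copy of the environment with the backend overrides applied."""
    env = dict(os.environ if base_env is None else base_env)
    env["ANTHROPIC_BASE_URL"] = base_url  # where Anthropic-style requests are sent
    env["ANTHROPIC_MODEL"] = model        # which model name the tool asks for
    return env


# Placeholder endpoint: substitute the URL from the vendor's own docs.
env = claude_code_env("https://example.invalid/anthropic", "qwen3.5-plus", base_env={})
print(env)

# Assumption: the CLI is installed and on PATH as `claude`. Launching it would
# look roughly like this, and is left commented out so the sketch has no side effects:
# import subprocess
# subprocess.run(["claude"], env=claude_code_env(url, model), check=False)
```

Because the switch is this small, the cost of moving between backends is low, which is part of why vendors compete so hard to be the default.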

The significance of this shift is straightforward: Chinese vendors are no longer satisfied with being able to say “our model scores well.” They are now fighting for a more concrete position: who becomes the default backend for Claude Code, Cline, MCP, terminal agents, and enterprise coding assistants as a full workflow stack.

Behind that is an even larger trend: what Chinese LLM vendors are really competing for today is not just position on a model leaderboard, but ownership of the developer access layer.

Whoever gets into IDEs, agent frameworks, enterprise workflows, office collaboration suites, and cloud platforms first has a better chance of turning model capability into sustainable revenue. A chatbot that “answers pretty well” is no longer enough.

4. Why big tech is gaining more structural advantage

Reddit discussions often place big tech firms and startups side by side as if they were playing the same game. In reality, as model quality converges and costs and compliance pressure rise, the structural advantages of large incumbents are being amplified again.

Big tech companies own a more complete commercial loop

Take ByteDance as an example. In April 2025, Volcano Engine disclosed that as of the end of March 2025, daily token calls on the Doubao large model had already exceeded 12.7 trillion, three times the level from December 2024 and 106 times the level a year earlier. By the time Doubao 2.0 launched, the company further said daily token usage had grown by more than 500 times compared with the initial launch period.

Source: Doubao 1.5 Deep Thinking release, 2025

Source: Doubao 2.0 official launch
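The multiples quoted above can be turned back into rough absolute levels. This sketch uses only the disclosed figures (12.7 trillion daily tokens, 3x, and 106x). Because the source says the current level "exceeded" 12.7 trillion, the results are approximate floors, not reported numbers.

```python
def implied_earlier_level(current: float, multiple: float) -> float:
    """Given a current level and a growth multiple, back out the earlier level."""
    return current / multiple


current_daily_tokens = 12.7e12  # end of March 2025, as disclosed

dec_2024 = implied_earlier_level(current_daily_tokens, 3)        # "three times the level from December 2024"
year_earlier = implied_earlier_level(current_daily_tokens, 106)  # "106 times the level a year earlier"

print(f"Implied December 2024 level: about {dec_2024 / 1e12:.2f} trillion tokens per day")
print(f"Implied level a year earlier: about {year_earlier / 1e9:.0f} billion tokens per day")
```

On these figures, daily usage went from roughly 120 billion tokens to more than 12 trillion in about a year.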

That means ByteDance no longer simply “has a strong model.” It already has a full commercial loop around that model:

- consumer app entry points

- an enterprise API platform

- cloud infrastructure

- a multimodal product matrix

- integration with an existing content ecosystem

If we move forward to March 2026, the major product lines of several large Chinese tech companies can be summarized roughly as follows:

- ByteDance: the latest general-purpose line is Seed 2.0, built around Pro / Lite / Mini agent models and real production deployment. But the clearly open public line remains Seed-OSS / Seed1.5-VL.

- Tencent: Tencent Cloud’s product overview shows the latest general text generation line has already advanced to Tencent HY 2.0 Think and Tencent HY 2.0 Instruct. On the reasoning side, there is also hunyuan-t1-latest, while the open side includes two visible MoE lines: Hunyuan-A13B and Hunyuan-Large.

Source: Tencent Hunyuan product overview

Source: Tencent-Hunyuan/Hunyuan-A13B

Source: Tencent-Hunyuan-Large

- Baidu: on the commercial front, the main stack is already ERNIE 5.0 official release / ERNIE 5.0 Thinking Preview / ERNIE X1.1 Preview / ERNIE 4.5 Turbo. Baidu defines ERNIE 5.0 as a “native full-modality large model,” while the major public open line still centers mainly on the ERNIE 4.5 family.

Source: Baidu Qianfan model services page

Source: ERNIE 5.0 Preview news

Source: PaddlePaddle/ERNIE on GitHub

Alibaba, Tencent, and Baidu are all moving in the same broad direction. For them, LLMs are not a standalone business. They are something that can be embedded into e-commerce, search, social products, office software, cloud services, content distribution, and even hardware entry points.

One small but telling detail: after March 20, 2026, Alibaba Cloud stopped accepting new purchases for Coding Plan Lite, and current public documentation mainly pushes the Pro tier. That detail also suggests that model competition is no longer just about “who is stronger,” but about “who can package the strongest models into a stable supply system for developers and enterprises.”

Source: Alibaba Cloud Model Studio: Coding Plan overview

Startups now need a much sharper definition of themselves

That does not mean startups have no opportunity. It means the opportunity no longer comes from saying, “we also have a general-purpose model.”

The more realistic paths now tend to be:

- make a sharp breakthrough in coding, agents, long context, or multimodality

- trade open weights for developer ecosystem traction and brand visibility

- use lower inference costs to break into the API market

- build revenue through vertical deployment, private delivery, or enterprise solutions

In other words, startups are still at the table, but the center of competition has moved from “build a model” to “find a landing point that can actually scale.”

5. Regulation and compute are not background conditions. They are shaping forces.

English-language discussions often explain China’s LLM progress mainly through “engineering execution” or “open-source strategy.” But if we ignore policy and compute constraints, the picture becomes distorted.

Regulation has not stopped growth, but it has deeply shaped product form

On January 9, 2026, the Cyberspace Administration of China announced that as of December 31, 2025, a cumulative total of 748 generative AI services had completed filing, and another 435 applications or functions had completed registration.

Source: CAC announcement, 2026-01-09

That means China is not a market where any model can immediately scale the moment it works. Filing, registration, launch disclosure, and scenario-specific compliance are built into the process. The result is that:

- large companies are better able to absorb compliance costs

- cloud vendors are more likely to become the base infrastructure of the industry

- public-facing model products place more weight on stability, controllability, and deployable use cases

Compute constraints are also pushing the market toward efficiency

In its earnings release on May 28, 2025, NVIDIA disclosed that the U.S. government had informed the company on April 9, 2025, that exporting H20 products to China would require a license. As a result, NVIDIA recorded $4.5 billion in related charges in fiscal Q1 2026, and another $2.5 billion in expected revenue could not be shipped.

Source: NVIDIA fiscal Q1 2026 results

That does not mean Chinese companies can no longer keep training models. But it does help explain why Chinese vendors in 2025 and 2026 have been so committed to these directions:

- sparse MoE

- lower active parameter counts

- more efficient long-context inference

- open-sourcing models so the community can help optimize deployment

So one defining feature of China’s LLM market is this: it is not only competing on capability, but on efficiency.
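One way to see the efficiency push is to compare active and total parameter counts for the MoE models cited earlier in this article. The sketch below computes the active fraction per token for each. The figures are the ones quoted above. The active ratio is only a rough proxy for per-token compute, since it ignores attention cost, memory footprint, and serving details.

```python
from typing import NamedTuple


class MoEModel(NamedTuple):
    name: str
    total_b: float   # total parameters, in billions
    active_b: float  # parameters active per token, in billions

    @property
    def active_ratio(self) -> float:
        return self.active_b / self.total_b


# Parameter counts as quoted in this article.
MODELS = [
    MoEModel("Qwen3-235B-A22B", 235, 22),
    MoEModel("Kimi K2.5", 1000, 32),
    MoEModel("GLM-5", 744, 40),
    MoEModel("Step-3.5-Flash", 196, 11),
]

# Sort from sparsest to densest activation.
ranked = sorted(MODELS, key=lambda m: m.active_ratio)

for m in ranked:
    print(f"{m.name:<18} {m.total_b:>6.0f}B total  {m.active_b:>4.0f}B active  {m.active_ratio:6.1%}")
```

All four models activate less than a tenth of their weights per token, which is the "lower active parameter counts" direction in numeric form.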

6. My reading of China’s LLM landscape as of March 2026

If I had to summarize the market in one sentence, it would be this:

China’s LLM story is not about finding “the Chinese OpenAI.” It is about who can win at the same time across models, distribution, toolchains, and compliance.

More specifically:

- Doubao looks like one of the strongest consumer entry points in China right now.

- ByteDance Seed has already moved its latest general-purpose front line to Seed 2.0, but the main public open line is still Seed-OSS.

- Qwen looks more like the shared public foundation for China’s open models and developer ecosystem, although the product front line has already extended from Qwen3 to qwen3.5-plus and qwen3-max.

- DeepSeek remains the clearest open reasoning model with major technical brand power.

- Tencent Hunyuan 2.0 and ERNIE 5.0 / X1.1 Preview deserve more attention at the level of real business deployment and enterprise access. They may not dominate forum discussion, but they are far from marginal.

- Kimi K2.5, GLM-5, MiniMax-M2.7, StepFun, and Xiaomi MiMo-V2-Pro / Omni are still in the game and could continue to break through in coding, agents, long context, or multimodality.

- Tencent and Baidu may not be the hottest names in forum discussion, but they remain very difficult to underestimate once real-world business scenarios and distribution systems are considered.

That is why I do not fully agree with oversimplified takes like “the Six Tigers will all disappear soon.” A more accurate way to put it might be this:

General-purpose chat models will become more crowded, but the next stratification around agents, tool use, vertical industries, enterprise delivery, and multimodal workflows is still far from settled.

FAQ

Who is actually the strongest Chinese LLM player right now?

That depends on what kind of “strength” you mean.

- If you care about mainstream user access in China, Doubao has the clearest lead.

- If you care about influence among global open-source developers, Qwen and DeepSeek are still the strongest names.

- If you care about the speed of technical catch-up, players such as Kimi, GLM, MiniMax, StepFun, and Xiaomi MiMo still deserve close attention.

Is DeepSeek still a “phenomenon-level” company?

Yes, but its importance no longer comes only from user scale. It also changed the entire market’s expectations around open reasoning models, distillation, and cost structure. Even if its consumer ranking moves around in the future, its technical brand effect will remain hard to ignore in the near term.

Will China’s big tech companies eventually wipe out all startups?

Big tech will continue to widen its structural advantages, but that does not mean startups have no room left. The condition is that they cannot keep building only the same kind of general assistant that large firms can also build. They need sharper differentiation in coding, agents, long context, multimodality, or enterprise delivery.

What is the most important thing to watch next in China’s LLM market?

I would focus on four questions:

- Who can get agents into high-frequency workflows for real, rather than leaving them at the demo stage?

- Who can turn open models into the default foundation for developers?

- Who can push models into more industry scenarios while staying compliant?

- Who can keep driving inference costs down under constrained compute conditions?

Further reading

- Reddit thread: The current state of the Chinese LLMs scene

- Qwen3 official blog

- Qwen3-Coder official blog

- Alibaba Cloud Model Studio: Deep Thinking docs (including qwen3.5-plus / qwen3-max)

- Alibaba Cloud Model Studio: Qwen3.5 vision docs

- DeepSeek-R1 official announcement

- MoonshotAI / Kimi-K2.5

- Zhipu AI: GLM-5 official docs

- MiniMax official news: MiniMax M2.7

- ByteDance Seed 2.0 official page

- Seed 2.0 Official Launch

- ByteDance-Seed GitHub organization

- ByteDance Seed OSS

- StepFun model overview

- Step 3.5 Flash model card

- Xiaomi MiMo-V2-Pro official page

- Xiaomi MiMo-V2-Omni official page

- QuestMobile 2025 AI application-layer report

- Alibaba Cloud Model Studio: Coding Plan overview

- Alibaba Cloud Model Studio: Claude Code docs

- Tencent Hunyuan product overview

- Tencent-Hunyuan/Hunyuan-A13B

- Tencent-Hunyuan-Large

- Baidu Qianfan model services page

- ERNIE 5.0 Preview news

- PaddlePaddle/ERNIE on GitHub

- Baidu Qianfan Coding Plan

- Quickly deploy OpenClaw

- Zhipu AI: Claude Code docs

- Zhipu AI: GLM Coding Plan FAQ

- Kimi K2: stronger coding, faster API

- Kimi Playground: tool-use capabilities

- CAC: filing announcement for generative AI services in 2025

- China.gov.cn / Xinhua: 515 million generative AI users in China as of June 2025

- NVIDIA fiscal Q1 2026 results

Source Notice

This article is published by merchmindai.net. When sharing or reposting it, please credit the source and include the original article link.

Original article: https://merchmindai.net/blog/en/post/china-llm-landscape-2026