What Was Claude Code's 'Source Leak' Really About?

On March 31, 2026, Claude Code sparked a source leak debate after an npm package shipped with a source map. This article traces the timeline, reactions on Hacker News and X, and what the incident actually exposed.


On March 31, 2026, discussion about a Claude Code source leak suddenly exploded across X and Hacker News. The trigger was simple enough: people noticed that Anthropic had shipped Claude Code to npm with a source map included, which made it possible to recover original TypeScript source, internal prompts, feature flags, and some unreleased functionality.

At first glance, this looked like a familiar "major AI company slips up" story. But reducing it to a bit of gossip about source code being exposed misses the more interesting part. What surfaced here was not just code. It was a view into an AI tooling company's release process, product roadmap, defensive thinking, and the unease users already feel about agent-style tools.

What made the story spread so quickly was not just that the code could be mirrored. People wanted to know what Anthropic had hidden inside the product, where Claude Code was heading next, and what its internal assumptions looked like once the outer shell was stripped away.

Timeline: How the Story Unfolded

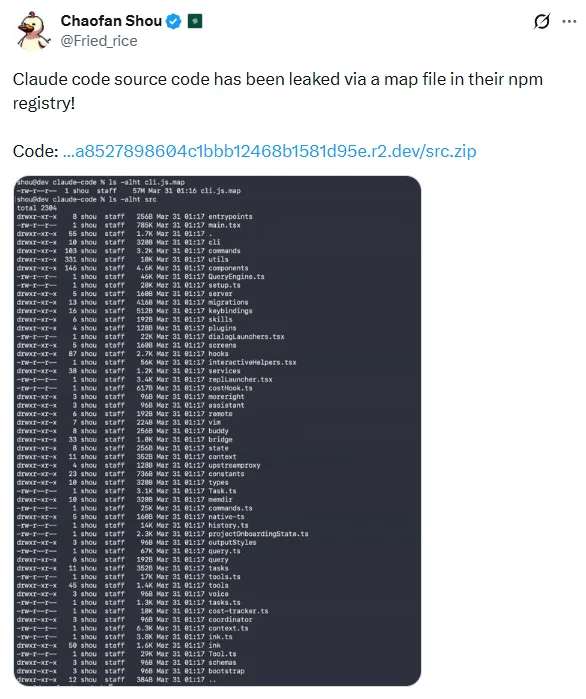

According to the public timeline captured by XCancel, Chaofan Shou (@Fried_rice) posted on March 31, 2026 at 08:23 UTC that Claude Code's source had been exposed because the npm package included a source map, and linked to a src.zip archive.

From there, the discussion split into two tracks.

The first was verification. A lot of developers did not react with excitement so much as suspicion: was this really recoverable source, a readable source map, or just a pile of debug symbols? Before long, several unofficial mirrors appeared on GitHub. One README explicitly said the code had been extracted from cli.js.map in the npm package @anthropic-ai/claude-code, pointing to the widely discussed v2.1.88 release.
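The verification question has a fairly mechanical answer. A Source Map v3 file is plain JSON, and when a bundler embeds the optional `sourcesContent` array, each entry holds the full original text of the file listed at the same index in `sources`. The sketch below shows why recovery is trivial in that case. The example map and the path `src/main.ts` are invented for illustration and are not taken from the actual package.

```python
import json


def recover_sources(map_text: str) -> dict[str, str]:
    """Return {source path: original text} for sources embedded in a source map.

    Only entries that carry text in `sourcesContent` are returned. A map
    without that field yields an empty dict: it holds position mappings,
    not readable source.
    """
    source_map = json.loads(map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    recovered = {}
    for path, text in zip(paths, contents):
        if text is not None:  # null is allowed when a source is unavailable
            recovered[path] = text
    return recovered


# Hypothetical, hand-written map used only for illustration.
EXAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["src/main.ts"],
    "sourcesContent": ["export const greeting: string = 'hi';\n"],
    "names": [],
    "mappings": "",
})

if __name__ == "__main__":
    for path, text in recover_sources(EXAMPLE_MAP).items():
        print(path, len(text), "characters recovered")
```

If `sourcesContent` is absent, the same function returns nothing, which is the "just debug symbols" case skeptics were asking about.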

The second track was closer to treasure hunting. Once readable source was organized into mirror repositories, the conversation moved beyond whether there had been a leak at all and toward what could be learned from it. People started combing through the repository with grep, Claude Code, and a lot of manual reading, looking for internal switches, system prompts, and unreleased features. Repeatedly mentioned items in the Hacker News thread included:

- An unreleased assistant mode under the codename kairos

- A companion or ASCII-pet style feature referred to as Buddy System

- A supposed Undercover mode for stripping Anthropic traces from open-source contributions

- Hidden anti-distillation related settings, reportedly involving fake tool definitions meant to pollute scraped data

By the time I checked the thread, it had already climbed to the Hacker News front page with hundreds of points and well over two hundred comments. At that stage, this was no longer just a story about an npm package shipping with a map file. It had turned into a public inspection of what Claude Code actually is, and what Anthropic has been quietly building around it.

First, What This Was Not

This is the part most likely to get blurred by dramatic headlines.

Quite a few people on Hacker News asked whether the leak involved Claude or Opus model weights. It did not. What people were looking at were the implementation details of Claude Code as a CLI and agent harness: the product layer that assembles prompts, calls tools, manages sessions, and orchestrates workflows in the terminal.

That matters for two reasons:

- Anthropic's core model capability did not directly spill out because of this incident.

- The real value exposed here sits less in parameters than in product operations.

That is why so many reactions converged on the same point. The embarrassing part was not that "people can now copy Claude." It was that Anthropic's internal prompts, feature flags, experimental roadmap, and strategic mechanisms were suddenly open to public inspection.

If you have been following Anthropic's broader arguments around distillation and capability copying, that reversal is especially striking. We previously covered how model outputs can become training material for competitors. This incident suggested the next layer of valuable intelligence may be the agent shell itself.

The Main Reaction Buckets

The comments on Hacker News and X were wide-ranging, but they mostly fell into four recognizable buckets.

1. The engineering-failure view

The most common reaction on X was not security analysis but ridicule. For many developers, the shocking part was not that source could be recovered. It was that a leading AI coding tools company had apparently shipped source maps to npm in the first place.

The mood behind that reaction was straightforward:

- This was not some sophisticated exploit chain.

- It looked more like a CI/CD or packaging mistake.

- It spread so quickly because it clashed with Anthropic's image of engineering rigor.

That is also why some people immediately connected the episode to "vibe coding." The line is not entirely fair, but it travels well because the narrative is so clean: the company selling coding agents could not lock down its own release pipeline.

2. The roadmap-leak view

Another group cared much less about code quality than about product direction. Their interest was not whether Claude Code contained some magical trick. It was whether the code revealed what Anthropic had been experimenting with.

That included questions such as:

- How far Anthropic planned to push assistant-style agent modes

- How it thought about AI-authored open-source contributions

- Whether anti-distillation mechanisms were already built into the product layer

- How companion, assistant, review, and adjacent features were being organized internally

The value here is not that someone can instantly fork a stronger Claude Code. It is that competitors, researchers, and users all get an earlier look at Anthropic's direction of travel.

3. The privacy-and-ethics view

If the source map mistake triggered jokes, the parts that made some developers uneasy were the behavioral assumptions they believed they saw in the extracted code.

Two clusters of discussion came up repeatedly on Hacker News:

- References to negative-emotion or profanity regexes, which some users interpreted as signs that Claude Code may track frustrated prompts for feedback or analysis

- Debate around Undercover mode, because community-circulated prompt fragments made it sound less like simple metadata cleanup and more like an effort to make AI-written contributions read as though they were authored by a human developer

That part needs care. What the public has right now is the community's reading of extracted code and prompt fragments, not a full official explanation from Anthropic. Still, the discomfort is real because it touches two sensitive questions:

- How exactly is my interaction data being analyzed?

- Should AI-generated work be designed to present itself as human-authored?

Those concerns fit into a larger pattern. More and more of the debate around agent tools is no longer about whether the model gets an answer wrong. It is about whether the tool performs actions, leaves traces, and represents itself in ways users did not explicitly sign up for.

4. The demystification view

There was also a cooler-headed camp that tried to shrink the story back down.

Their point was simple: Claude Code is still a terminal agent shell. However popular it is, the real ceiling on the experience still comes from the underlying model, tool use quality, product services, and iteration speed, not from one CLI repository.

That view carries two implications:

- Seeing the code does not mean recreating Claude's capability.

- But it does reduce the mystique around closed-source agent shells.

In that sense, the incident felt less like a catastrophic breach and more like demystification. It reminded people that many AI agent products are not powered by mysterious machinery at the outer layer. They are built from software engineering, prompt orchestration, tool wiring, and a long list of product judgments.

What This Actually Exposed

If you only read headlines, the whole thing becomes "Anthropic source leak." A more precise description would be this:

Anthropic appears to have exposed part of Claude Code's internal implementation and product intent through a release artifact handling mistake.

What matters more than the word "leak" is the set of realities the incident brought into view.

1. Source maps are now part of the product attack surface

In the traditional frontend world, leaking a source map often looks like a careless configuration issue. In agent products, a source map can expose:

- System prompts

- Internal feature flags

- Telemetry and risk-control logic

- Unreleased feature codenames

- Product strategy assumptions

In other words, build artifacts are now intelligence surfaces. That should be a warning for anyone shipping agents, browser extensions, IDE tools, or CLI products.
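One way to treat build artifacts as a surface worth checking is a small gate that inspects the package contents before publishing. The following is a minimal sketch, not a description of Anthropic's pipeline. It assumes the package has already been unpacked into a directory (for example from the tarball that `npm pack` produces), and the function name `find_source_map_exposure` is hypothetical.

```python
import re
import tempfile
from pathlib import Path

# Matches the comment a bundler appends to point a bundle at its source map.
SOURCE_MAPPING_URL = re.compile(r"^//[#@]\s*sourceMappingURL=(\S+)", re.MULTILINE)


def find_source_map_exposure(package_dir: Path) -> list[str]:
    """List .map files and sourceMappingURL comments found under package_dir."""
    findings = []
    for path in sorted(package_dir.rglob("*")):
        if not path.is_file():
            continue
        rel = path.relative_to(package_dir).as_posix()
        if path.suffix == ".map":
            findings.append(f"map file: {rel}")
        elif path.suffix in {".js", ".mjs", ".cjs"}:
            text = path.read_text(encoding="utf-8", errors="replace")
            match = SOURCE_MAPPING_URL.search(text)
            if match:
                # A data: URL means the whole map is embedded in the bundle itself.
                inline = match.group(1).startswith("data:")
                kind = "inline map" if inline else "map reference"
                findings.append(f"{kind}: {rel}")
    return findings


if __name__ == "__main__":
    # Build a throwaway package layout to demonstrate the check.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        (root / "cli.js").write_text(
            "console.log(1);\n//# sourceMappingURL=cli.js.map\n", encoding="utf-8"
        )
        (root / "cli.js.map").write_text('{"version": 3}', encoding="utf-8")
        for finding in find_source_map_exposure(root):
            print(finding)
```

A release job could fail whenever the returned list is non-empty. The check is deliberately coarse: it flags every map, including ones a team ships on purpose, so an allowlist would be a reasonable addition.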

2. The fragile layer is often the product shell, not the model

This incident did not leak model weights, and it did not instantly erase Claude's competitive edge. What it did show is that as model companies turn into product companies, the easiest place for things to break may not be training at all. It may be the ordinary-looking software delivery layer wrapped around the model.

Claude Code has become one of Anthropic's most visible products, so developers naturally hold it to a very specific standard. The backlash was amplified because the failure landed in exactly the wrong place:

If you sell developer workflow tools, developers will judge you by developer workflow standards.

3. Closed-source CLI mystique is getting harder to maintain

One more reality surfaced in the discussion: more agent CLIs are open source now, or at least close enough that the shell around the model is no longer especially mysterious. In that environment, keeping a terminal agent as a black box offers diminishing returns and creates extra reputational risk when something leaks anyway.

That is why my final take on this story is fairly restrained: it feels more like brand demystification than a technically devastating leak.

What Anthropic really needs to repair is not only a packaging mistake. It is a crack in developer perception: the realization that even one of the most advanced AI coding tool teams can still make the kind of mistake that makes every engineer wince.

Closing Thought

If the whole Claude Code incident has to be reduced to a single line, it is probably this:

What leaked was not Claude's brain. It was Anthropic's control panel.

That is why the response has been such a strange mix of mockery, curiosity, and unease. People laughed, then read the code closely, then started worrying about the hidden modes and prompts they found along the way.

The broader lesson is that competition in AI coding tools is no longer just about model benchmarks. It is also about:

- Who runs the cleaner release pipeline

- Who behaves more transparently at the product layer

- Who can balance automation with trust

What matters next is not whether more mirror repositories appear. It is whether Anthropic chooses to explain these internal mechanisms directly, and whether it can rebuild developer confidence in Claude Code as an engineering product.


Source Notice

This article is published by merchmindai.net. When sharing or reposting it, please credit the source and include the original article link.

Original article: https://merchmindai.net/blog/en/post/claude-code-source-map-leak-timeline-reactions