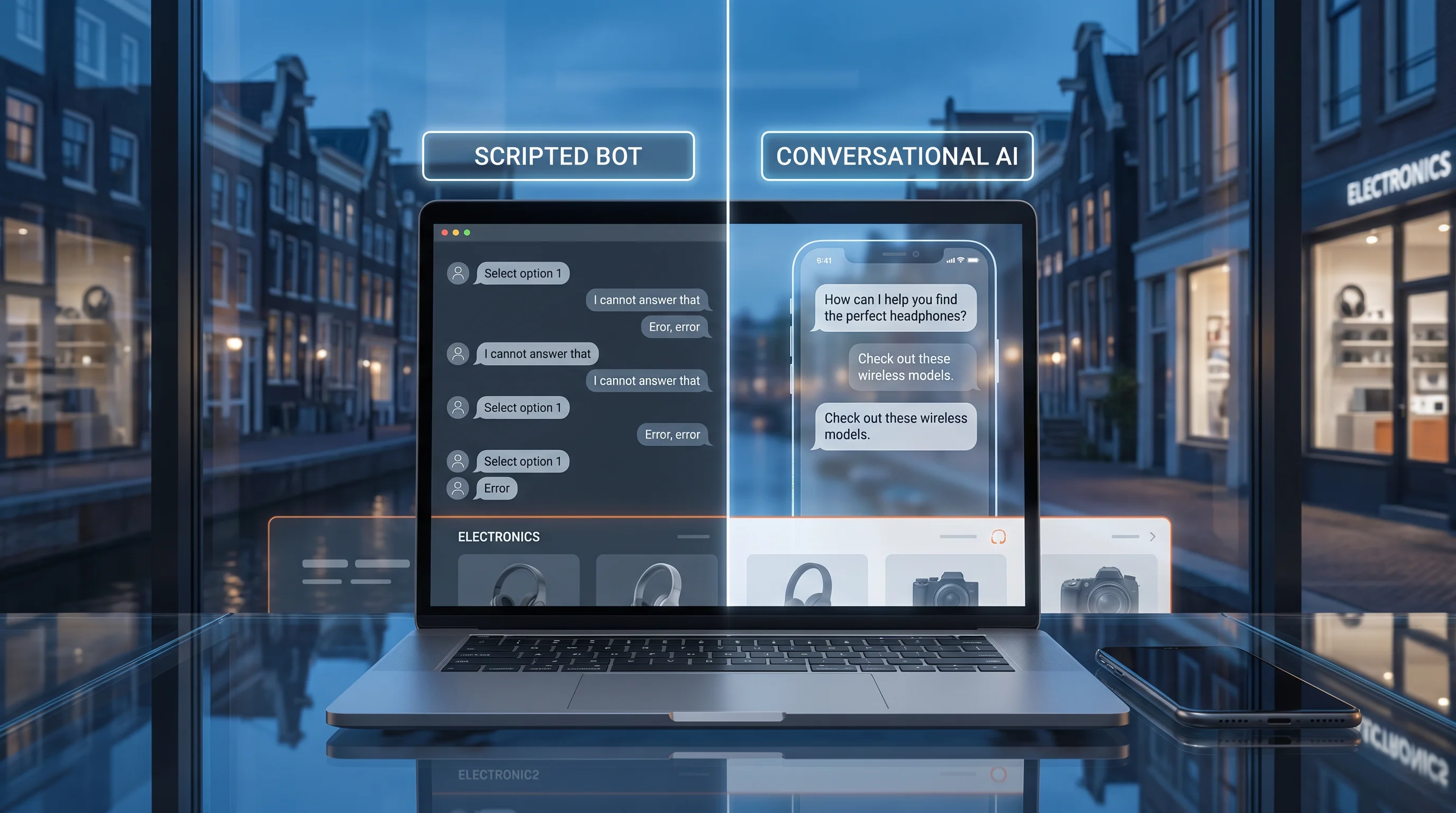

Dutch Direct Communication Culture: Why Amsterdam Tech Stores Reject Scripted Chatbots

A benchmark-driven look at why direct, explicit answers outperform scripted bot flows for electronics merchants serving Dutch and wider EU shoppers.

The problem is not that shoppers dislike AI. They dislike being slowed down by vague AI.

In Dutch commerce, directness is not a brand tone experiment. It is an operating requirement.

High-intent electronics shoppers in Amsterdam, Rotterdam, Utrecht, and the wider Benelux market do not open chat because they want a warm welcome. They open chat because they want one of four things, immediately:

- a clear yes or no,

- a compatibility answer,

- a delivery truth,

- or a refund-policy clarification.

When the interface responds with scripted pleasantries, rigid menu trees, or generic FAQ summaries, trust drops fast. The shopper does not read that as "professional." They read it as avoidance.

That matters more in electronics than in many other verticals because the question usually appears late in the funnel, when basket intent is high:

- Will this mount fit my exact phone case?

- Is this item shipping from an EU warehouse or from elsewhere?

- Does this printer need a separate cartridge or app?

- Can I get support in English now and still return locally later?

Those are buying questions, not browsing questions.

For this article, I reviewed homepage captures taken on May 13, 2026 from three electronics and adjacent device merchants with clear EU-facing storefront patterns:

- Polaroid: https://polaroid.com

- Quad Lock EU: https://quadlockcase.eu

- Motion RC EU: https://motionrc.eu

The goal is not to claim these brands are all Dutch. They are not. The point is that Amsterdam-based electronics operators face the same structural problem: customers in direct-communication markets punish scripted, indirect support more aggressively than many CX teams realize.

The benchmark conclusion is simple:

for electronics merchants serving Dutch buyers, the wrong chatbot tone is not a copy issue. It is a conversion issue.

The numbers: how scripted support turns a high-intent session into a low-trust session

Direct-communication markets amplify one basic ecommerce law: the closer the customer is to purchase, the less patience they have for conversational theater.

To model the impact, take a mid-market electronics merchant with these characteristics:

| Variable | Benchmark assumption |
|---|---|
| Monthly sessions | 320,000 |
| Sessions that trigger a pre-purchase question | 11% |
| Share of questions that are policy, shipping, compatibility, or stock clarity questions | 72% |
| Conversion after a clear direct answer | 6.4% |
| Conversion after a scripted or delayed answer | 2.7% |
| Average order value | EUR 146 |

That creates 35,200 question-led sessions per month.

If those sessions are handled with direct, precise answers:

- expected orders: 2,253

- expected revenue: EUR 328,938

If those same sessions hit a scripted bot flow or slow human handoff:

- expected orders: 950

- expected revenue: EUR 138,700

The directness gap is therefore about:

- 1,303 lost orders per month

- EUR 190,238 in monthly revenue opportunity

- EUR 2.28M annually

That is before counting:

- support labor wasted on repeated clarification,

- higher refund demand from misunderstood delivery or compatibility expectations,

- negative review volume tied to "couldn't get a straight answer",

- and suppression of repeat purchase behavior.
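The benchmark arithmetic above can be reproduced in a few lines. This is a minimal sketch, not a forecasting tool: the class and function names are illustrative, the inputs are the benchmark assumptions from the table, and the conversion rates are applied to all question-led sessions, as the figures above do. The 72% question-type share is not used in that calculation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Benchmark:
    """Benchmark assumptions taken from the table above."""
    monthly_sessions: int = 320_000
    question_rate: float = 0.11         # sessions that trigger a pre-purchase question
    direct_conversion: float = 0.064    # conversion after a clear direct answer
    scripted_conversion: float = 0.027  # conversion after a scripted or delayed answer
    average_order_value: int = 146      # EUR


def directness_gap(b: Benchmark) -> dict:
    """Compare expected monthly orders and revenue for direct vs scripted handling."""
    question_sessions = round(b.monthly_sessions * b.question_rate)
    direct_orders = round(question_sessions * b.direct_conversion)
    scripted_orders = round(question_sessions * b.scripted_conversion)
    direct_revenue = direct_orders * b.average_order_value
    scripted_revenue = scripted_orders * b.average_order_value
    monthly_gap = direct_revenue - scripted_revenue
    return {
        "question_sessions": question_sessions,
        "direct_orders": direct_orders,
        "scripted_orders": scripted_orders,
        "lost_orders": direct_orders - scripted_orders,
        "monthly_revenue_gap": monthly_gap,
        "annual_revenue_gap": monthly_gap * 12,
    }


result = directness_gap(Benchmark())
for name, value in result.items():
    print(f"{name}: {value:,}")
```

Changing any single assumption, for example the scripted conversion rate, shows how sensitive the gap is to that input.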

Where the losses actually come from: question-type benchmark

The phrase "scripted chatbot problem" sounds abstract until you break it into specific question classes. Once you do that, the economics become easier to manage.

For Dutch and wider EU electronics merchants, the most commercially sensitive question types are usually these:

| Question type | Example customer message | Why scripted handling fails | Expected downside if unresolved |
|---|---|---|---|
| Shipping certainty | "Can this reach Amsterdam before Friday?" | Generic delivery language ignores cutoff and warehouse reality | Cart abandonment on urgent baskets |
| Regional eligibility | "Do you ship this model to the Netherlands from the EU store?" | Bot defers to policy links instead of confirming store logic | Loss of trust and session exit |
| Compatibility | "Will this mount fit my iPhone 15 Pro with this case?" | FAQ summaries rarely cover exact combinations | High-intent conversion loss |
| Accessory completeness | "What else do I need to use this on day one?" | Scripted bots upsell before resolving functional need | Smaller baskets or abandoned carts |
| Returns and warranty scope | "If it does not fit, can I return it locally?" | Policy pages are long, region logic is fragmented | Purchase hesitation and lower first-order conversion |

In internal support reviews, these questions are often miscategorized as "basic" because they are short. They are not basic. They are compact expressions of final-stage purchase doubt.

A more useful way to score bot quality

Most support teams still evaluate chat automation using shallow metrics:

- containment rate,

- number of conversations handled,

- or average handle time.

Those metrics matter, but they miss the commercial question that actually determines whether the bot is helping:

Did the first answer reduce uncertainty enough to preserve the order?

For direct-communication markets, a better scorecard is:

| Metric | Why it matters |
|---|---|
| First-answer resolution on pre-sale questions | Measures whether the AI actually resolved the purchase blocker |
| Clarification turns per high-intent session | Too many turns usually means the answer was not direct enough |
| Conversion rate on question-led sessions | Ties support quality directly to revenue |
| Repeat-contact rate within the same session | Signals whether the first answer was incomplete or evasive |
| Refund/complaint rate tied to expectation mismatch | Detects when "helpful" answers were too vague to be safe |

This is where many Amsterdam operators will find the hidden loss. Their bot may look productive in CX dashboards while still damaging revenue because it is answering in a brand-safe way instead of an outcome-safe way.
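One way to make the scorecard concrete is to compute it from tagged session records. The sketch below assumes a hypothetical `QuestionSession` record with one boolean or count per metric. The field names are illustrative and do not come from any particular analytics or chat platform, and the tagging itself (for example, deciding whether a first answer resolved the question) is assumed to happen upstream.

```python
from dataclasses import dataclass
from typing import Dict, Sequence


@dataclass(frozen=True)
class QuestionSession:
    """One question-led session, tagged during review (hypothetical schema)."""
    resolved_on_first_answer: bool
    clarification_turns: int
    converted: bool
    repeat_contact: bool
    mismatch_refund: bool = False  # refund or complaint tied to expectation mismatch


def scorecard(sessions: Sequence[QuestionSession]) -> Dict[str, float]:
    """Compute the five scorecard metrics as simple rates and averages."""
    if not sessions:
        raise ValueError("scorecard needs at least one session")
    total = len(sessions)
    orders = [s for s in sessions if s.converted]
    refunds = sum(s.mismatch_refund for s in orders)
    return {
        "first_answer_resolution": sum(s.resolved_on_first_answer for s in sessions) / total,
        "clarification_turns_per_session": sum(s.clarification_turns for s in sessions) / total,
        "question_led_conversion": len(orders) / total,
        "repeat_contact_rate": sum(s.repeat_contact for s in sessions) / total,
        # Refund rate is measured against orders, not against all sessions.
        "mismatch_refund_rate": refunds / len(orders) if orders else 0.0,
    }


sample = [
    QuestionSession(True, 0, True, False),
    QuestionSession(True, 1, True, False, mismatch_refund=True),
    QuestionSession(False, 3, False, True),
    QuestionSession(False, 2, False, True),
]
print(scorecard(sample))
```

Containment rate and handle time can sit alongside these numbers, but the conversion and refund columns are the ones that tie answer quality to revenue.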

Why this effect is stronger in Dutch and adjacent EU buying contexts

Shoppers in direct-communication cultures tend to reward clarity and penalize ambiguity earlier in the journey. In practice, that means they are less tolerant of support experiences like:

- "Thanks for reaching out, we appreciate your interest..."

- "Please select from these categories before we can help..."

- "Availability may vary depending on your region..."

- "Your request has been forwarded to the relevant team..."

All four sentences may be operationally polite. None of them answer the actual question.

For Amsterdam-based electronics merchants, that gap gets worse because many purchases involve at least one high-friction variable:

- country-specific shipping expectations,

- VAT or customs concerns,

- accessory compatibility,

- warranty scope,

- or localized fulfillment.

The support layer therefore needs to sound less like a brand script and more like a capable operator.

What "Dutch directness" means in ecommerce support

Directness in retail support does not mean rude language. It means reducing ambiguity.

The best support experience for a Dutch electronics buyer usually has these properties:

- The answer starts with the answer.

- Limitations are disclosed early.

- The merchant does not pretend every answer is universal across regions.

- The next action is concrete.

- The system avoids unnecessary emotional padding.

That sounds obvious. It is still rare.

Many chatbot implementations were designed around call-center softness:

- greet first,

- route second,

- qualify third,

- answer fourth.

That sequence is exactly backwards for high-intent ecommerce.

In electronics, the customer often arrives with a precise object in mind, a model number, a device variant, or a shipping deadline. If your system forces a human-like script before it delivers truth, you create friction at the most expensive point in the session.

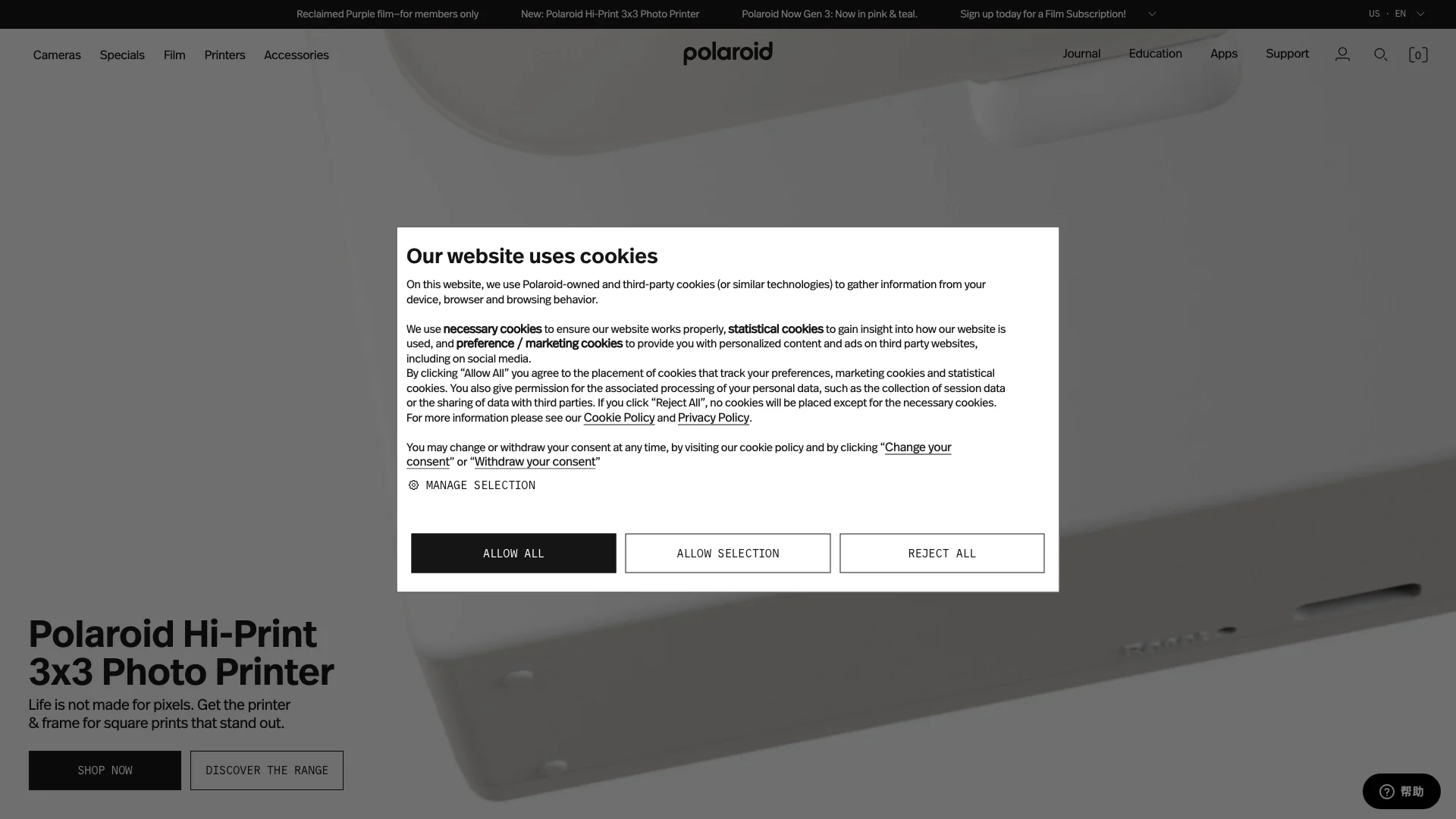

Case study 1: https://polaroid.com shows how brand-led presentation can still fail the directness test

The Polaroid homepage at https://polaroid.com is visually clean and product-led. The visible navigation is straightforward:

- Cameras

- Specials

- Film

- Printers

- Accessories

- Support

The hero promotes the Polaroid Hi-Print 3x3 Photo Printer. On paper, that is exactly the sort of electronics-adjacent product that benefits from quick purchase confidence.

But the screenshot also reveals the main problem immediately: a large cookie modal dominates the center of the page before the shopper can act. That matters because buyers in direct-communication markets are already trying to answer a narrow purchase question:

- Does this work with my phone?

- What consumables do I need?

- How fast can it ship?

- Is support local or global?

Instead of resolving uncertainty, the first interruption on the screen is compliance overhead.

This is a useful benchmark lesson for Amsterdam tech merchants. Creative minimalism is not enough. Direct markets do not reward elegance if the support path still makes the buyer work for clarity.

What a scripted chatbot usually gets wrong here

On a storefront like https://polaroid.com, many bots open with:

- "Hi there. Looking for a camera or printer today?"

- "Can I help you explore our catalog?"

- "Please choose a category to get started."

Those are safe prompts. They are also low-value prompts.

A better answer-first system would detect product context and start with sharper entry points:

- "Need to know if Hi-Print works with your phone model?"

- "Need shipping or consumable info before buying?"

- "Need the difference between 2x3 and 3x3 printer systems?"

That is what directness looks like operationally: fewer greetings, more decision support.

Case study 2: https://quadlockcase.eu shows how scripted flows break under regional complexity

The Quad Lock EU storefront at https://quadlockcase.eu is a strong example of why direct markets punish unnecessary layering.

The screenshot shows several important signals at once:

- an EU regional selector,

- support visibility in the top bar,

- a shipping notice,

- a discount banner,

- a large "Get $10 Off Your First Order" popup,

- category tiles for Motorcycle, Car, Cycle, Everyday, and Marine,

- and a bottom strip warning that the site may not ship to the current country.

This is exactly the kind of environment where a customer question becomes operationally specific within seconds:

- Am I on the right regional store?

- Will the product ship to my country from this storefront?

- Does this discount apply to the EU store?

- Is the mount compatible with my exact phone and case combination?

If chat opens with a generic scripted flow, the customer experiences the merchant as evasive even if the information technically exists somewhere on the page.

The directness gap on cross-border electronics storefronts

For Dutch buyers, cross-border friction is tolerated only if it is explicit. The moment the site appears to hide behind vague language, confidence drops. On https://quadlockcase.eu, the support burden is not just product education. It is decision compression:

- choose the correct store,

- confirm the right shipping geography,

- verify device compatibility,

- understand promotion limits,

- and purchase before distraction takes over.

That is why scripted chatbots underperform in these contexts. They assume the customer wants conversation. The customer wants operational truth.

What the better support pattern looks like

A direct-response AI layer on a site like https://quadlockcase.eu should lead with logic such as:

- "You appear to be on the EU store. Tell me your shipping country and phone model, and I will confirm store fit and mount compatibility."

- "If your country is unsupported on this storefront, I will tell you before recommending any product."

- "If your current promo is not valid on this store, I will say so directly."

That style is not less friendly. It is more commercially useful.

Case study 3: https://motionrc.eu is closer to the right model because it exposes operational clarity earlier

The Motion RC EU homepage at https://motionrc.eu is the most instructive of the three because it surfaces concrete support cues before the customer even asks.

Visible signals include:

- EU Warehouse

- a local-looking phone number

- Live Chat

- Help

- Community

- Parts Finder

- clear navigation across RC Models, Electronics, Power, Workbench, Accessories, and Detailing

That matters because electronics buyers do not only need inspiration. They need orientation.

Compared with more promotional storefront patterns, https://motionrc.eu communicates something very important early: there is an operational system behind the catalog. Not just a hero banner.

Why this matters for Dutch-market expectations

Direct-communication customers are more willing to proceed when the merchant exposes structure:

- where stock is held,

- how to get support,

- how to identify the right part,

- and what help channel exists right now.

This is the hidden lesson many chatbot teams miss. The chatbot should not compensate for a vague storefront by becoming chatty. It should reinforce the storefront's operational clarity by becoming more precise.

On a site like https://motionrc.eu, the most valuable AI behavior is not small talk. It is grounded assistance:

- identifying compatible parts,

- clarifying warehouse and shipping implications,

- routing technical questions without losing context,

- and escalating only when genuine ambiguity remains.

Cross-brand comparison: what the three storefronts reveal

Taken together, the screenshots show three different support maturity patterns.

| Brand | Visible strength | Visible risk | What a Dutch buyer likely wants next |
|---|---|---|---|
| https://polaroid.com | Clear product-led merchandising and simple top navigation | Central cookie interruption and limited first-screen operational clarity | Fast answers about compatibility, consumables, and shipping |
| https://quadlockcase.eu | Strong category framing and obvious EU-store context | Promo noise, popup overload, and country/store ambiguity | Immediate confirmation of store fit, shipping country support, and device compatibility |
| https://motionrc.eu | Operational cues are visible early: EU warehouse, live chat, parts finder | Cookie bar still occupies attention and technical catalog complexity remains high | Direct help locating the right part and understanding stock/warehouse logic |

This matters because chat performance is inseparable from page context.

If the page already creates uncertainty, the support layer must reduce entropy instantly. It cannot afford to add another interaction layer that asks the customer to repeat what the page should already imply.

That is why the same chatbot script performs differently across markets. In a lower-friction category, generic politeness may be tolerated. In electronics, and especially in direct-communication cultures, vague wording compounds the uncertainty already created by complex catalogs, logistics, and regional rules.

The hidden issue is not language. It is decision velocity.

Many teams assume the problem is localization:

- should the chat speak Dutch,

- should it sound more friendly,

- should it use local phrasing,

- should it offer more menu options.

Those changes help at the margin. But they do not solve the core issue.

The core issue is that direct-communication buyers judge support on decision velocity. They want to know how quickly the system moves them from uncertainty to action.

That means the best AI answer in this market often has a structure like this:

- Conclusion: yes, no, not from this store, not by this date, not with that model.

- Constraint: the reason is stock location, device variant, shipping cutoff, or policy scope.

- Action: buy this version, switch store, order before this time, or contact a specialist with these details prefilled.

Anything softer usually feels less helpful, even if the wording is technically more polite.
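The conclusion, constraint, action structure can be enforced in code rather than left to copy guidelines. The sketch below is one possible shape, with a hypothetical `DirectAnswer` type that refuses to render if any of the three parts is missing. The example text reuses wording from this article.

```python
from dataclasses import dataclass


def _as_sentence(text: str) -> str:
    """Trim a part and make sure it ends with sentence punctuation."""
    text = text.strip()
    if not text:
        raise ValueError("each part of a direct answer must be non-empty")
    return text if text[-1] in ".!?" else text + "."


@dataclass(frozen=True)
class DirectAnswer:
    conclusion: str  # yes, no, not from this store, not by this date
    constraint: str  # stock location, device variant, shipping cutoff, policy scope
    action: str      # the concrete next step for the buyer

    def render(self) -> str:
        """Return the answer with the conclusion first, always in the same order."""
        parts = (self.conclusion, self.constraint, self.action)
        return " ".join(_as_sentence(p) for p in parts)


answer = DirectAnswer(
    conclusion="Yes, this EU-store item can ship to the Netherlands",
    constraint="Friday delivery is unlikely if ordered after 14:00 CET",
    action="If you want, I can check whether the compatible alternative is in stock now",
)
print(answer.render())
```

Making the conclusion a required field is the design choice that matters here: a response with no conclusion cannot be produced at all, which is stricter than asking writers to remember the pattern.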

Why traditional chatbot setups fail in direct-communication markets

There are five recurring failure patterns.

1. They optimize for politeness before precision

Many bots are trained to sound welcoming. In Dutch-market commerce, that often reads as delay.

The customer asks:

Does this ship from the EU warehouse to the Netherlands by Friday?

The scripted bot says:

Thanks for contacting us. I would be happy to assist with your shipping question.

That is not assistance. It is latency disguised as tone.

2. They separate policy knowledge from product knowledge

Electronics merchants often keep shipping, returns, warranty, and compatibility in different systems. The shopper experiences those as one decision.

If the AI can answer compatibility but not shipping geography, the answer is incomplete.

3. They hide operational limitations until late

Nothing destroys trust faster than learning after five chat turns that the store does not serve your country, the promo does not apply, or the accessory is incompatible with your exact variant.

Direct markets prefer an uncomfortable truth now over a pleasant-sounding answer that has to be corrected later.

4. They use menu trees where intent detection is required

Rigid flows work for low-stakes tasks like checking opening hours. They fail when the user writes:

- "iPhone 15 Pro with MagSafe wallet, bike mount, ship to NL"

- "need spare ESC from EU warehouse"

- "printer for Android, not iPhone, available this week?"

Those are compressed buying intents. The system must parse them, not flatten them.
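To illustrate what "parse, not flatten" means, here is a deliberately small rule-based sketch that pulls structured slots out of the three messages above. Everything in it is an assumption for illustration: the vocabularies, the regular expressions, and the `BuyingIntent` fields are hypothetical, and a production system would more likely use a trained model grounded in catalog data than hand-written patterns.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical vocabularies; real ones would come from catalog and logistics data.
COUNTRIES = {"nl": "NL", "netherlands": "NL", "be": "BE", "belgium": "BE",
             "de": "DE", "germany": "DE"}
ACCESSORY_TERMS = ("magsafe wallet", "bike mount", "car mount", "esc")

DEVICE_RE = re.compile(
    r"\biphone\s+\d{1,2}(?:\s+(?:pro\s+max|pro|plus|mini))?\b", re.IGNORECASE)
SHIP_TO_RE = re.compile(r"\bship(?:s|ping)?\s+to\s+(?:the\s+)?([a-z]+)", re.IGNORECASE)
EU_WAREHOUSE_RE = re.compile(r"\beu\s+warehouse\b", re.IGNORECASE)
DEADLINE_RE = re.compile(r"\b(today|this week|(?:before|by)\s+\w+day)\b", re.IGNORECASE)


@dataclass
class BuyingIntent:
    device: Optional[str] = None
    ship_to: Optional[str] = None
    accessories: List[str] = field(default_factory=list)
    wants_eu_warehouse: bool = False
    deadline: Optional[str] = None


def parse_intent(message: str) -> BuyingIntent:
    """Extract whichever slots are present; missing slots stay None or empty."""
    text = message.lower()
    device = DEVICE_RE.search(message)
    ship_to = SHIP_TO_RE.search(message)
    deadline = DEADLINE_RE.search(message)
    return BuyingIntent(
        device=device.group(0) if device else None,
        ship_to=COUNTRIES.get(ship_to.group(1).lower()) if ship_to else None,
        accessories=[t for t in ACCESSORY_TERMS
                     if re.search(rf"\b{re.escape(t)}\b", text)],
        wants_eu_warehouse=bool(EU_WAREHOUSE_RE.search(message)),
        deadline=deadline.group(1).lower() if deadline else None,
    )


for msg in (
    "iPhone 15 Pro with MagSafe wallet, bike mount, ship to NL",
    "need spare ESC from EU warehouse",
    "printer for Android, not iPhone, available this week?",
):
    print(parse_intent(msg))
```

Slots that stay empty are as useful as the ones that get filled, because they tell the system which single clarifying question is worth asking.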

5. They escalate too late or too early

Bad bots either trap the shopper in automation or throw everything to humans. Both are expensive.

The correct model is:

- automate the clear answer,

- ask one clarifying question if needed,

- then escalate with full context only when uncertainty is real.
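The three-step model above can be written as a small decision function. This is a sketch under stated assumptions: the inputs (`has_grounded_answer`, `missing_details`, `clarifying_questions_asked`) are hypothetical signals that an upstream component would need to supply, and the limit of one clarifying question is taken directly from the list above.

```python
from enum import Enum
from typing import Sequence


class NextStep(Enum):
    ANSWER = "answer"
    CLARIFY = "clarify"
    ESCALATE = "escalate"


def next_step(
    has_grounded_answer: bool,
    missing_details: Sequence[str],
    clarifying_questions_asked: int,
) -> NextStep:
    """Pick the next move: answer, ask one clarifying question, or escalate."""
    # Automate the clear answer when data supports it and nothing is missing.
    if has_grounded_answer and not missing_details:
        return NextStep.ANSWER
    # Ask at most one clarifying question before involving a human.
    if missing_details and clarifying_questions_asked == 0:
        return NextStep.CLARIFY
    # Otherwise the uncertainty is real: escalate with full context.
    return NextStep.ESCALATE


print(next_step(True, [], 0))                  # clear question, grounded data
print(next_step(False, ["phone model"], 0))    # one detail missing, nothing asked yet
print(next_step(False, ["phone model"], 1))    # already asked once
```

The order of the checks encodes the priority: answering comes first, clarification is capped, and escalation is the fallback rather than the default.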

What scripted bots sound like versus what conversion-safe bots sound like

The fastest way to understand the gap is to compare answer patterns directly.

Scenario 1: shipping urgency

Customer:

I am in Amsterdam. If I order today, can this ship from the EU warehouse and arrive before Friday?

Low-performing scripted response:

Thanks for your message. Shipping times may vary depending on your location and the product selected. Please review our shipping page for more information.

Why it fails:

- it does not answer the yes/no question,

- it forces the customer out of the purchase flow,

- and it creates suspicion that the merchant does not know its own logistics.

Better response:

Usually yes from the EU warehouse, but Friday delivery becomes unlikely after the daily cutoff. If you order before 14:00 CET, this item normally ships within 1 business day. If you want, I can confirm whether your exact SKU is in EU stock now.

Scenario 2: compatibility

Customer:

Will this mount work with iPhone 15 Pro if I use a MagSafe wallet case?

Low-performing scripted response:

Our mounts are designed for a range of devices. Please check the compatibility information on the product page.

Better response:

Not reliably with most wallet cases. If you are using a MagSafe wallet attached to the phone, stability is usually reduced and we would not recommend it for cycling use. If you want, I can suggest the case-and-mount setup that is designed for iPhone 15 Pro.

Scenario 3: regional store confusion

Customer:

I am seeing the EU store. Does this item ship to the Netherlands or do I need another region?

Low-performing scripted response:

We operate multiple regional stores. Please select your preferred country to continue shopping.

Better response:

The EU store is the correct storefront for the Netherlands. The key question is whether this SKU is available from EU inventory. Tell me the product name or link and I will confirm that first.

These examples look simple, but they reveal the real design standard:

the answer should remove doubt, not route the doubt somewhere else.

The AI solution: how HeiChat fits direct-communication ecommerce better than generic scripted bots

HeiChat should be understood here not as a decorative chatbot, but as commerce infrastructure for answer-first support.

For Amsterdam and wider EU electronics merchants, the valuable capabilities are not generic. They are operational:

1. Intent compression

HeiChat can interpret short, dense buyer messages that contain multiple constraints at once:

- device model,

- shipping country,

- stock urgency,

- accessory type,

- and return anxiety.

That is essential in direct markets, where customers often write compact requests instead of conversational lead-ins.

2. Policy-aware answers

A strong AI layer must combine:

- product data,

- warehouse logic,

- regional shipping rules,

- return policy,

- and promo constraints.

Otherwise the answer remains partially true, which is commercially dangerous.

3. Tone control for answer-first markets

HeiChat can be configured to answer in a direct pattern:

- short conclusion first,

- one-sentence explanation,

- concrete next action.

Example:

Yes, this EU-store item can ship to the Netherlands, but Friday delivery is unlikely if ordered after 14:00 CET. Standard delivery is 2 to 4 business days. If you want, I can check whether the compatible alternative is in stock now.

That style closes doubt faster than a generic service voice.

4. Zero-touch resolution for repetitive pre-sale questions

The best questions to automate in electronics are not random. They cluster around:

- compatibility,

- delivery windows,

- warehouse location,

- return scope,

- accessory matching,

- and inventory alternatives.

These are exactly the question types where answer-first automation creates both revenue upside and labor savings.

5. Clean escalation with context

When escalation is required, HeiChat should pass:

- the product context,

- the detected country,

- the exact question,

- prior policy checks,

- and the unresolved edge case.

That prevents the classic support reset where the human asks the customer to repeat everything.
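As an illustration of what such a handoff could carry, the sketch below models the five items as a plain record serialized to JSON. The `EscalationContext` type and its field names are hypothetical and are not HeiChat's actual API or payload format. The sample values are drawn from the scenarios earlier in this article.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class EscalationContext:
    """Hypothetical handoff record passed from the AI layer to a human agent."""
    product_context: str
    detected_country: str
    exact_question: str
    prior_policy_checks: List[str] = field(default_factory=list)
    unresolved_edge_case: str = ""

    def to_json(self) -> str:
        """Serialize the record so a helpdesk or ticketing tool can attach it."""
        return json.dumps(asdict(self), ensure_ascii=False, indent=2)


handoff = EscalationContext(
    product_context="Bike mount product page, iPhone 15 Pro",
    detected_country="NL",
    exact_question="Will this mount work with iPhone 15 Pro if I use a MagSafe wallet case?",
    prior_policy_checks=["EU store is correct for the Netherlands"],
    unresolved_edge_case="Customer's wallet case is a third-party model not in the catalog",
)
print(handoff.to_json())
```

Keeping the customer's exact wording in `exact_question`, rather than a summary, lets the human agent answer the question that was actually asked.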

Implementation roadmap for Amsterdam-based electronics merchants

Phase 1: audit the directness gap

- Review the top 100 pre-sale chats and email tickets from the last 60 days

- Tag questions by compatibility, shipping, warranty, stock, and returns

- Measure how often the first answer actually contains the answer

- Identify responses that begin with script language instead of decision language
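The last audit step can be partly automated with a simple heuristic. The sketch below flags first responses that open with script language. The phrase list is an assumption built from the examples quoted in this article, not a validated lexicon, and a flagged response still needs a human read to confirm it actually delayed the answer.

```python
from typing import Iterable

# Hypothetical opener list, taken from the scripted examples in this article.
SCRIPT_OPENERS = (
    "thanks for reaching out",
    "thanks for contacting us",
    "thanks for your message",
    "hi there",
    "please select",
    "please choose",
    "your request has been forwarded",
)


def starts_with_script_language(response: str) -> bool:
    """Return True when the response opens with a known scripted phrase."""
    return response.strip().lower().startswith(SCRIPT_OPENERS)


def script_opener_share(responses: Iterable[str]) -> float:
    """Share of first responses that begin with script language."""
    responses = list(responses)
    if not responses:
        raise ValueError("no responses to audit")
    flagged = sum(starts_with_script_language(r) for r in responses)
    return flagged / len(responses)


first_answers = [
    "Thanks for contacting us. I would be happy to assist with your shipping question.",
    "Usually yes from the EU warehouse, but Friday delivery becomes unlikely after the daily cutoff.",
    "Please select from these categories before we can help.",
    "The EU store is the correct storefront for the Netherlands.",
]
print(f"{script_opener_share(first_answers):.0%} of first answers open with script language")
```

Run against the top 100 pre-sale chats, this gives a baseline number to track as macros are rewritten in Phase 2.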

Phase 2: redesign the answer pattern

- Rewrite support macros into answer-first format

- Standardize "yes/no + condition + next step" as the default response structure

- Add region-aware logic for EU, UK, and non-EU shoppers

- Build explicit incompatibility responses instead of soft deferrals

Phase 3: deploy AI on the highest-value question clusters

- Launch HeiChat on product, cart, and help surfaces

- Ground answers in catalog, logistics, and policy data

- Prioritize compatibility and shipping clarity before general FAQ coverage

- Define clear escalation triggers for edge cases

Phase 4: measure commercial impact

- Track conversion on question-led sessions

- Track time-to-answer for high-intent questions

- Track repeat-contact rate after first AI answer

- Track refund and complaint rates tied to expectation mismatch

If the merchant executes well, the first visible gain is usually not labor reduction. It is higher confidence inside high-intent sessions.

That is the metric that matters first.

What Amsterdam operators should measure in the first 90 days

Once the AI experience is redesigned around directness, the post-launch measurement plan needs to stay equally pragmatic.

Week 1 to 2: verify answer quality

During the first two weeks, manually review:

- the top 50 AI-handled pre-sale sessions,

- the questions that required escalation,

- and the answers that led to repeat contacts.

The goal is not to ask whether the bot "sounds good." The goal is to ask:

- Did it answer the question in the first line?

- Did it expose the limiting condition early enough?

- Did it recommend a next step that a buyer could actually take?

Week 3 to 6: connect support to commerce signals

During weeks 3 to 6, the merchant should compare:

- conversion rate for sessions with AI interaction,

- conversion rate for sessions with unresolved questions,

- average time-to-answer on compatibility and shipping questions,

- and bounce rate from product pages where AI was triggered.

This is usually where the biggest misconception gets corrected. Teams often think faster chat matters because it reduces ticket volume. In reality, faster and more direct chat matters because it preserves expensive demand that marketing already paid to acquire.

Week 7 to 12: refine boundaries and escalation logic

By the second and third month, the main work is not adding personality. It is tightening boundaries:

- identify which question types should never be answered with soft language,

- identify where the AI must refuse to guess,

- identify which policy areas need explicit country logic,

- and identify where human escalation genuinely protects conversion.

At this stage, the strongest merchants start to treat AI support less like a help-center feature and more like a checkout-adjacent revenue system.

That is the mindset shift behind the title of this article. Amsterdam tech stores do not reject scripted chatbots because they dislike automation. They reject them because scripted automation is too indirect for the level of purchase certainty electronics shoppers require.

Key takeaways

- 📌 In direct-communication markets, shoppers do not reject AI. They reject delay, vagueness, and scripted detours.

- 📌 Electronics support is expensive because the question often appears at the exact moment purchase intent peaks.

- 📌 https://polaroid.com shows how elegant merchandising can still leave clarity gaps.

- 📌 https://quadlockcase.eu shows how regional and shipping complexity punishes indirect support language.

- 📌 https://motionrc.eu shows the value of exposing operational structure early.

- 📌 The right AI design is answer-first, policy-aware, and context-grounded.

Final word

Amsterdam tech merchants do not need a friendlier chatbot. They need a more useful one.

The strategic mistake is assuming that support automation succeeds when it sounds human. In direct-communication markets, automation succeeds when it sounds clear, confident, and operationally honest.

That is why scripted chatbots keep underperforming. They were built to simulate a service conversation. Modern commerce needs infrastructure that resolves buying friction inside the session.

HeiChat is strongest when used that way:

- not as a floating chat widget,

- not as a scripted FAQ wrapper,

- but as the revenue layer that answers the real question before the customer disappears.

If your electronics storefront serves Dutch buyers and still makes them work to get a straight answer, the problem is no longer copy. It is conversion architecture.

Source Notice

This article is published by merchmindai.net. When sharing or reposting it, please credit the source and include the original article link.

Original article: https://merchmindai.net/blog/en/post/dutch-direct-communication-culture-why-amsterdam-tech-stores-reject-scripted-chatbots